Showing posts with label SQL Server 2008. Show all posts

Tuesday, August 28, 2012

SQL Saturday 149 Announcement

I found out that my submission for SQL Saturday 149 was accepted and I will again be presenting at the MN SQL Saturday this year.

If you haven't signed up yet and you will be in the Twin Cities, MN area on Saturday, September 29th, 2012, you are missing out on one of the best SQL Server conferences around. This year, the event will be held at the University of Minnesota - Keller Hall, 200 Union Street SE, Minneapolis, MN 55455. Admittance to this event is free, but they do charge a lunch fee of $10.00 so that they can provide a lunch - not pizza! Please register soon as seating is limited, and let friends and colleagues know about the event.

You can find out more about this (and register!!) at the event homepage: http://www.sqlsaturday.com/149/eventhome.aspx

Friday, April 20, 2012

Extended Event - Check for Log Shrink and Email

I saw SQL Server MVP Jason Strate give a presentation a year or two ago on Extended Events. One situation I remember him describing was a log file that shrank every so often; for some reason this caused some issues (auto-grow or something). So he used Extended Events to capture the shrink and get more details on it. This problem sounded interesting to me and I wanted to see if I could write some code to do this from scratch. This is what I came up with:

First we need to create the Extended Event (hereafter referred to as XE) to capture the log shrink:

IF EXISTS(SELECT * FROM sys.server_event_sessions WHERE name = 'XE_Log_Shrink')

DROP EVENT SESSION XE_Log_Shrink ON SERVER

GO

CREATE EVENT SESSION XE_Log_Shrink ON SERVER -- Session Name

ADD EVENT sqlserver.databases_log_shrink -- Event we want to capture

(

ACTION -- What contents to capture

(

sqlserver.client_app_name

,sqlserver.client_hostname

,sqlserver.nt_username

,sqlserver.session_id

,sqlserver.sql_text

,sqlserver.username

)

)

ADD TARGET package0.ring_buffer WITH (MAX_DISPATCH_LATENCY = 1 SECONDS, STARTUP_STATE = ON) -- The target

GO

ALTER EVENT SESSION XE_Log_Shrink ON SERVER STATE = START
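
Before moving on, it can be worth confirming that the event exists on your build and that the session really started. This is only a sketch of one way to check, using the Extended Events DMVs; the session and event names are the ones from the script above.

```sql
-- Is the event we asked for available on this instance?
SELECT p.name AS package_name
      ,o.name AS event_name
      ,o.description
FROM sys.dm_xe_objects AS o
JOIN sys.dm_xe_packages AS p ON p.guid = o.package_guid
WHERE o.object_type = 'event'
  AND o.name = 'databases_log_shrink'

-- Is the session currently running? (a row only appears here while it is started)
SELECT s.name
      ,s.create_time
FROM sys.dm_xe_sessions AS s
WHERE s.name = 'XE_Log_Shrink'
```

If the second query returns no rows, the session is defined but not started.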

Next, to test it, we need to shrink a log (I'll grow it again afterwards, so I can redo this numerous times if I need to):

USE [AdventureWorks]

GO

DBCC SHRINKFILE (N'AdventureWorks_Log' , 1)

GO

USE [master]

GO

ALTER DATABASE [AdventureWorks] MODIFY FILE ( NAME = N'AdventureWorks_Log', SIZE = 100MB )

GO
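
To see that the shrink and the regrow actually happened, you could check the log file size before and after each step. A minimal sketch, assuming the same AdventureWorks database as above (size is stored in 8 KB pages, hence the arithmetic):

```sql
USE [AdventureWorks]
GO

-- Current size of the log file(s) in MB
SELECT name
      ,size * 8 / 1024 AS size_mb
FROM sys.database_files
WHERE type_desc = 'LOG'
GO
```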

Let's check the XE to see if we captured the event:

;WITH Data

AS (

SELECT CAST(target_data AS XML) AS TargetData

FROM sys.dm_xe_session_targets dt

JOIN sys.dm_xe_sessions ds ON ds.address = dt.event_session_address

JOIN sys.server_event_sessions ss ON ds.Name = ss.Name

WHERE dt.target_name = 'ring_buffer'

AND ds.Name = 'XE_Log_Shrink'

)

SELECT DATEADD(hour,-5,XEventData.XEvent.value('(@timestamp)[1]', 'datetime')) AS event_timestamp

,XEventData.XEvent.value('@name', 'varchar(4000)') AS event_name

,DB_NAME(XEventData.XEvent.value('(data/value)[2]', 'VARCHAR(100)')) AS DatabaseName

,XEventData.XEvent.value('(action/value)[2]', 'VARCHAR(512)') AS client_hostname

,XEventData.XEvent.value('(action/value)[3]', 'VARCHAR(512)') AS nt_username

,XEventData.XEvent.value('(action/value)[6]', 'VARCHAR(512)') AS username

,XEventData.XEvent.value('(action/value)[5]', 'VARCHAR(512)') AS sql_text

,XEventData.XEvent.value('(action/value)[4]', 'VARCHAR(512)') AS session_id

,XEventData.XEvent.value('(action/value)[1]', 'VARCHAR(512)') AS client_app_name

FROM Data d

CROSS APPLY TargetData.nodes('//RingBufferTarget/event') AS XEventData ( XEvent )
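
The query above picks the actions out by position and subtracts a fixed 5 hours to turn the UTC timestamp into local time. As a hedged alternative sketch (not what I ran originally), each action can be read by its name attribute, and the offset can be taken from the server's current UTC offset. Note that this uses today's offset, so events captured on the other side of a daylight saving change would be an hour off.

```sql
;WITH Data
AS (
    SELECT CAST(dt.target_data AS XML) AS TargetData
    FROM sys.dm_xe_session_targets dt
    JOIN sys.dm_xe_sessions ds ON ds.address = dt.event_session_address
    WHERE dt.target_name = 'ring_buffer'
      AND ds.name = 'XE_Log_Shrink'
)
SELECT -- shift the UTC event time by the server's current offset from UTC
       DATEADD(MINUTE, DATEDIFF(MINUTE, GETUTCDATE(), GETDATE()),
               X.XEvent.value('(@timestamp)[1]', 'datetime')) AS event_timestamp_local
       -- look each action up by name, so the order in the session definition no longer matters
      ,X.XEvent.value('(action[@name="client_hostname"]/value)[1]', 'VARCHAR(512)') AS client_hostname
      ,X.XEvent.value('(action[@name="nt_username"]/value)[1]', 'VARCHAR(512)') AS nt_username
      ,X.XEvent.value('(action[@name="username"]/value)[1]', 'VARCHAR(512)') AS username
      ,X.XEvent.value('(action[@name="session_id"]/value)[1]', 'INT') AS session_id
      ,X.XEvent.value('(action[@name="sql_text"]/value)[1]', 'VARCHAR(MAX)') AS sql_text
FROM Data d
CROSS APPLY d.TargetData.nodes('//RingBufferTarget/event') AS X ( XEvent )
```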

So, we should see the event now. But let's take it a step further: say we want to get emailed when it happens. I've created this stored proc, which checks once an hour. The interval is a parameter (@How_Often, in minutes) that you can configure as you see fit, but keep it under 2 hours or else you'll have to modify my WAITFOR logic. Alternatively, you could remove the WAITFOR logic and just run the proc from the schedule of the Agent job you set up; just match the @How_Often parameter to how often your job fires.

USE master

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

-- ===========================================================

-- Author: Eric Zierdt

-- Create date: 4/19/2012

-- Description: Checks for Log Shrinkage and sends email

-- URL: http://ericemployed.blogspot.com

-- Usage: exec Email_On_Log_Shrinkage 60

-- ===========================================================

CREATE PROCEDURE Email_On_Log_Shrinkage -- use ALTER PROCEDURE instead if the proc already exists

-- Add the parameters for the stored procedure here

@How_Often INT = 60

AS

BEGIN

SET NOCOUNT ON

Start_Code:

--DECLARE @How_Often INT = 60

DECLARE @RowCount INT = 0

,@SQL VARCHAR(MAX) = ''

IF EXISTS (SELECT * FROM tempdb.dbo.sysobjects WHERE ID = OBJECT_ID(N'tempdb..#TempData'))

BEGIN

DROP TABLE #TempData

END

;WITH Data

AS (

SELECT CAST(target_data AS XML) AS TargetData

FROM sys.dm_xe_session_targets dt

JOIN sys.dm_xe_sessions ds ON ds.address = dt.event_session_address

JOIN sys.server_event_sessions ss ON ds.Name = ss.Name

WHERE dt.target_name = 'ring_buffer'

AND ds.Name = 'XE_Log_Shrink'

)

SELECT DATEADD(hour,-5,XEventData.XEvent.value('(@timestamp)[1]', 'datetime')) AS event_timestamp

,XEventData.XEvent.value('@name', 'varchar(4000)') AS event_name

,DB_NAME(XEventData.XEvent.value('(data/value)[2]', 'VARCHAR(100)')) AS DatabaseName

,XEventData.XEvent.value('(action/value)[2]', 'VARCHAR(512)') AS client_hostname

,XEventData.XEvent.value('(action/value)[3]', 'VARCHAR(512)') AS nt_username

,XEventData.XEvent.value('(action/value)[6]', 'VARCHAR(512)') AS username

,XEventData.XEvent.value('(action/value)[5]', 'VARCHAR(512)') AS sql_text

,XEventData.XEvent.value('(action/value)[4]', 'VARCHAR(512)') AS session_id

,XEventData.XEvent.value('(action/value)[1]', 'VARCHAR(512)') AS client_app_name

INTO #TempData

FROM Data d

CROSS APPLY TargetData.nodes('//RingBufferTarget/event') AS XEventData ( XEvent )

WHERE DATEADD(hour,-5,XEventData.XEvent.value('(@timestamp)[1]', 'datetime')) > DATEADD(MINUTE,-1*@How_Often,GETDATE())

SELECT @RowCount = COUNT(1) FROM #TempData

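IF @RowCount > 0

BEGIN

PRINT CAST(@RowCount AS VARCHAR(10)) + ' Log Shrink records were found'

-- Table header (the HTML markup is an assumed reconstruction; adjust it to taste)

SET @SQL = '<table border="1"><tr><th>Server</th><th>Database Name</th><th>Time Stamp</th><th>Client Hostname</th><th>Username</th><th>SQL_Text</th></tr>'

-- Assumed row-building step: append one HTML row per shrink found in #TempData

SELECT @SQL = @SQL + '<tr><td>' + @@SERVERNAME + '</td><td>' + ISNULL(DatabaseName,'') + '</td><td>' + CONVERT(VARCHAR(30), event_timestamp, 120) + '</td><td>' + ISNULL(client_hostname,'') + '</td><td>' + ISNULL(username,'') + '</td><td>' + ISNULL(sql_text,'') + '</td></tr>'

FROM #TempData

SET @SQL = @SQL + '</table>'

--PRINT @SQL

-- Send the email only when at least one shrink was found

EXEC msdb.dbo.sp_send_dbmail

@recipients = '[Your Email Here]',

@body = @SQL,

@subject = 'Log Shrink Detected',

@profile_name = '[Your Profile Name Here]',

@body_format = 'html';

END

ELSE

PRINT 'No Shrinks in the time frame requested'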

DROP TABLE #TempData

DECLARE @Delay VARCHAR(10)

--,@How_Often INT = 60

IF @How_Often < 60

SET @Delay = '00:' + RIGHT('00' + CAST(@How_Often AS VARCHAR(2)),2) + ':00'

ELSE

SET @Delay = '01:' + RIGHT('00' + CAST(@How_Often-60 AS VARCHAR(2)),2) + ':00'

WAITFOR DELAY @Delay

GOTO Start_Code

END

GO

Then the last step is to call this proc from an Agent job. If you use the WAITFOR logic, I'd still set a schedule to run every hour, just in case it fails. The more I think about this, though, the more I prefer dropping the WAITFOR logic and just running the job every hour; but if the job fails, you won't get emailed.
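
For the "just run the job every hour" option, here is a rough sketch of creating the job in T-SQL rather than through the SSMS dialogs. The job, step and schedule names are placeholders I made up, and it assumes the WAITFOR / GOTO lines have been removed from the proc so that each call returns.

```sql
USE msdb
GO

-- Job, step and schedule names below are hypothetical placeholders
EXEC dbo.sp_add_job
     @job_name = N'Email On Log Shrinkage'

EXEC dbo.sp_add_jobstep
     @job_name = N'Email On Log Shrinkage'
    ,@step_name = N'Check XE_Log_Shrink ring buffer'
    ,@subsystem = N'TSQL'
    ,@database_name = N'master'   -- the proc above was created in master
    ,@command = N'EXEC dbo.Email_On_Log_Shrinkage @How_Often = 60'

EXEC dbo.sp_add_schedule
     @schedule_name = N'Hourly - Log Shrink Check'
    ,@freq_type = 4               -- daily
    ,@freq_interval = 1           -- every day
    ,@freq_subday_type = 8        -- repeat in units of hours
    ,@freq_subday_interval = 1    -- every 1 hour

EXEC dbo.sp_attach_schedule
     @job_name = N'Email On Log Shrinkage'
    ,@schedule_name = N'Hourly - Log Shrink Check'

-- Target the local server so the Agent will actually run the job
EXEC dbo.sp_add_jobserver
     @job_name = N'Email On Log Shrinkage'
    ,@server_name = N'(local)'
GO
```

Keep the 60 in the step command in sync with the schedule interval, so each run looks back exactly one interval.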

--Eric

Labels: Extended Events, Log Shrinkage, SQL, SQL Server, SQL Server 2008, SQL Server 2008R2, TSQL

Friday, April 13, 2012

SSRS Queries

I've recently been working with SSRS and needed to write some queries to get more knowledge about what was happening on the server.

One of the first things I wanted to know was which subscriptions had run but did not succeed. This query should be run on the server that hosts your ReportServer database. I'm running these on SQL Server 2008R2.

USE [ReportServer]

--GET THE SERVER NAME

DECLARE @ServerURL VARCHAR(MAX)

SELECT TOP 1 @ServerURL =[MachineName]

FROM [dbo].Keys

WHERE [MachineName] IS NOT NULL

IF CHARINDEX('\',@ServerURL) > 0

SET @ServerURL = LEFT(@ServerURL,CHARINDEX('\',@ServerURL)-1)

SET @ServerURL = 'http://' + @ServerURL + '/Reports/Pages'

--FIND THE NON-SUCCESSFUL SUBSCRIPTIONS

;WITH AgentJobsCTE AS (

SELECT [SJ].job_id

,[SJ].name AS AgentJobName

,[SJS].[command]

,CAST(REPLACE(REPLACE(REPLACE([SJS].[command],'exec [ReportServer].dbo.AddEvent @EventType=''TimedSubscription'', @EventData=''',''),'exec ReportServer.dbo.AddEvent @EventType=''TimedSubscription'', @EventData=''',''), '''', '') AS SYSNAME) AS SubscriptionID

FROM msdb.[dbo].[sysjobs] AS SJ

JOIN msdb.[dbo].[sysjobsteps] AS SJS ON [SJ].[job_id] = [SJS].[job_id]

WHERE SJS.[command] LIKE '%TimedSubscription%'

)

SELECT O.[UserName] AS OwnerName,M.[UserName] AS ModifiedBy,C.[Name] AS ReportName,[AJ].AgentJobName,S.[SubscriptionID],S.[LastStatus],S.[LastRunTime],S.[ModifiedDate],S.[Report_OID]

,@ServerURL + '/SubscriptionProperties.aspx?ItemPath=' + REPLACE(REPLACE(C.Path,'/','%2f'),' ','+') + '&IsDataDriven=False&SubscriptionID=' + CAST(S.[SubscriptionID] AS VARCHAR(MAX)) AS SubscriptionURL

,@ServerURL + '/Report.aspx?ItemPath=' + REPLACE(REPLACE(C.Path,'/','%2f'),' ','+') + '&SelectedTabId=PropertiesTab&SelectedSubTabId=SubscriptionsTab&SortBy=LastExecuted&IsAscending=false' AS ReportURL

FROM [ReportServer].[dbo].[Subscriptions] AS S

JOIN [ReportServer].[dbo].[Users] AS O ON [S].[OwnerID] = [O].[UserID]

JOIN [ReportServer].[dbo].[Users] AS M ON S.[ModifiedByID] = [M].[UserID]

JOIN [ReportServer].[dbo].[Catalog] AS C ON [S].[Report_OID] = [C].[ItemID]

LEFT JOIN [AgentJobsCTE] AJ ON CAST(S.[SubscriptionID] AS SYSNAME) = AJ.[SubscriptionID]

WHERE [LastRunTime] >= '04/02/2012 15:00' --NOT NEEDED, BUT MAKES YOUR LIST SHORTER

AND [LastStatus] NOT LIKE 'Mail sent to%'

AND [LastStatus] NOT LIKE 'The file "%'

--AND [LastStatus] NOT LIKE 'Pending%' --Pending are currently running.

ORDER BY [LastRunTime] DESC

I noticed a number of subscriptions running for the same report, many of them at the same time. I wrote a query to tell me which subscriptions had the same "report parameter values". This doesn't look at the subscription start time or days of the week, just the parameters, so you'll need to do some investigation (the next query will help with that).

USE [ReportServer]

DECLARE @ServerURL VARCHAR(MAX)

SELECT TOP 1 @ServerURL =[MachineName]

FROM [dbo].Keys

WHERE [MachineName] IS NOT NULL

IF CHARINDEX('\',@ServerURL) > 0

SET @ServerURL = LEFT(@ServerURL,CHARINDEX('\',@ServerURL)-1)

SET @ServerURL = 'http://' + @ServerURL + '/Reports/Pages'

;WITH DuplicateSubscriptions AS (

SELECT C.Name AS ReportName

,CAST(Parameters AS VARCHAR(MAX)) AS Parameters

,[Report_OID]

,C.Path

,COUNT(1) AS [Count]

FROM [ReportServer].[dbo].[Subscriptions] S

JOIN [ReportServer].[dbo].[Catalog] AS C ON [S].[Report_OID] = [C].[ItemID]

LEFT JOIN [dbo].[ReportSchedule] AS RS ON [S].[SubscriptionID] = [RS].[SubscriptionID]

LEFT JOIN [dbo].[Schedule] AS SC ON RS.[ScheduleID] = SC.[ScheduleID]

WHERE (SC.EndDate IS NULL OR SC.EndDate >= GETDATE()) AND ISNULL(SC.[RecurrenceType], 0) <> 1 -- skip expired and one-time schedules

GROUP BY CAST(Parameters AS VARCHAR(MAX)),[Report_OID],C.Name,C.Path

HAVING COUNT(1) > 1

)

SELECT *

,@ServerURL + '/Report.aspx?ItemPath=' + REPLACE(REPLACE(Path,'/','%2f'),' ','+') + '&SelectedTabId=PropertiesTab&SelectedSubTabId=SubscriptionsTab&SortBy=LastExecuted&IsAscending=false' AS URL

FROM [DuplicateSubscriptions]

--ORDER BY [ReportName], [Count] DESC

ORDER BY [Count] DESC, [ReportName]

So when you find a possible duplicate subscription in the previous list, get the Report_OID and Parameters fields, paste them into the variables in this query and execute it to see if it needs more investigation. I usually look at the "LastRun" field and see if there are any duplicates in that list. If you need to dig in more, the query provides links to both the subscription webpage and the report management page listing all subscriptions.

USE [ReportServer]

-- VIEW ALL (NON EXPIRED) SUBSCRIPTIONS FOR A SPECIFIC REPORT WITH SPECIFIC PARAMETERS

DECLARE @ServerSubscriptionURL VARCHAR(MAX)

SELECT TOP 1 @ServerSubscriptionURL =[MachineName]

FROM [dbo].Keys

WHERE [MachineName] IS NOT NULL

IF CHARINDEX('\',@ServerSubscriptionURL) > 0

SET @ServerSubscriptionURL = LEFT(@ServerSubscriptionURL,CHARINDEX('\',@ServerSubscriptionURL)-1)

SET @ServerSubscriptionURL = 'http://' + @ServerSubscriptionURL + '/Reports/Pages'

DECLARE @ReportID sysname = 'F9999999-888G-7H77-I9I9-000000J00000' -- ReportID from previous query

,@ParameterList VARCHAR(MAX) = '[parameters]'-- Parameters from previous query

SELECT C.Name AS ReportName

,S.[SubscriptionID]

,S.[Description] Descr

,S.[LastRunTime] LastRun

,S.[LastStatus]

--,SC.[EndDate]

--,SC.[RecurrenceType]

,U.[UserName] AS Owner

,@ServerSubscriptionURL + '/SubscriptionProperties.aspx?ItemPath=' + REPLACE(REPLACE(C.Path,'/','%2f'),' ','+') + '&IsDataDriven=False&SubscriptionID=' + CAST(S.[SubscriptionID] AS VARCHAR(MAX)) AS SubscriptionURL

,@ServerSubscriptionURL + '/Report.aspx?ItemPath=' + REPLACE(REPLACE(C.Path,'/','%2f'),' ','+') + '&SelectedTabId=PropertiesTab&SelectedSubTabId=SubscriptionsTab&SortBy=LastExecuted&IsAscending=false' AS ReportURL

FROM [ReportServer].[dbo].[Subscriptions] S

JOIN [ReportServer].[dbo].[Users] AS U ON [S].[OwnerID] = [U].[UserID]

JOIN [ReportServer].[dbo].[Catalog] AS C ON [S].[Report_OID] = [C].[ItemID]

LEFT JOIN [dbo].[ReportSchedule] AS RS ON [S].[SubscriptionID] = [RS].[SubscriptionID]

LEFT JOIN [dbo].[Schedule] AS SC ON RS.[ScheduleID] = SC.[ScheduleID]

WHERE Report_OID = @ReportID

AND CAST(S.Parameters AS VARCHAR(MAX)) = @ParameterList

AND (SC.EndDate IS NULL OR SC.EndDate >= GETDATE()) AND ISNULL(SC.[RecurrenceType], 0) <> 1 -- skip expired and one-time schedules

ORDER BY S.Description

But wait, that's not all. Some additional interesting queries:

See all currently running reports

SELECT [JobID],[StartDate],[ComputerName],[RequestName],[RequestPath]

,[Description],[Timeout] AS Timeout, DATEDIFF(ss, startdate, GETDATE()) AS SecondsSinceRun

, DATEDIFF(mi, startdate, GETDATE()) AS MinutesSinceRun

,[JobAction],[JobType],[JobStatus]

,'http://[reporturl]/Reports/Pages/Report.aspx?ItemPath=' + REPLACE(REPLACE(RJ.RequestPath,'/','%2f'),' ','+') + '&SelectedTabId=PropertiesTab&SelectedSubTabId=SubscriptionsTab&SortBy=LastExecuted&IsAscending=false' AS ReportURL

FROM [ReportServer].[dbo].[RunningJobs] AS RJ

See the run information for a specific report (comment out the RequestType if you want to see things other than subscriptions)

SELECT *

FROM [ReportServer].[dbo].[ExecutionLog2] AS EL

WHERE [ReportPath] LIKE '%YourReportName' AND [RequestType] = 'Subscription' -- replace YourReportName with the name of your report

ORDER BY [TimeStart] DESC
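
If you want a summary rather than the raw rows, the same view can be aggregated. This is only a sketch: the 7-day window is an arbitrary assumption, and the timing columns in ExecutionLog2 are in milliseconds.

```sql
-- Average timings per report for subscription runs over the last 7 days
SELECT EL.[ReportPath]
      ,COUNT(*) AS Runs
      ,AVG(EL.[TimeDataRetrieval]) AS AvgDataRetrievalMs
      ,AVG(EL.[TimeProcessing]) AS AvgProcessingMs
      ,AVG(EL.[TimeRendering]) AS AvgRenderingMs
      ,SUM(CASE WHEN EL.[Status] <> 'rsSuccess' THEN 1 ELSE 0 END) AS NonSuccessRuns
FROM [ReportServer].[dbo].[ExecutionLog2] AS EL
WHERE EL.[RequestType] = 'Subscription'
  AND EL.[TimeStart] >= DATEADD(DAY, -7, GETDATE())
GROUP BY EL.[ReportPath]
ORDER BY AvgDataRetrievalMs DESC
```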

View a schedule for a specific Subscription

-- VIEW SCHEDULE DATA FOR A SPECIFIC SUBSCRIPTION

SELECT *

FROM [dbo].[Schedule] AS S

JOIN [dbo].[ReportSchedule] AS RS ON [S].[ScheduleID] = [RS].[ScheduleID]

WHERE RS.[SubscriptionID] = '[subscriptionid]'

OK, one last one: this lets you see all expired (past the end date) and one-time subscriptions (useful if you want to clean them up).

-- EXPIRED AND ONE TIME RUN SUBSCRIPTIONS

DECLARE @ServerURL VARCHAR(MAX)

SELECT TOP 1 @ServerURL =[MachineName]

FROM [dbo].Keys

WHERE [MachineName] IS NOT NULL

IF CHARINDEX('\',@ServerURL) > 0

SET @ServerURL = LEFT(@ServerURL,CHARINDEX('\',@ServerURL)-1)

SET @ServerURL = 'http://' + @ServerURL + '/Reports/Pages'

;WITH ExpiredSubscriptions AS

(

SELECT RS.[SubscriptionID], S.[EndDate]

FROM [dbo].[Schedule] AS S

JOIN [dbo].[ReportSchedule] AS RS ON [S].[ScheduleID] = [RS].[ScheduleID]

WHERE S.[EndDate] < GETDATE() OR S.[RecurrenceType] = 1

)

SELECT S.[SubscriptionID]

,C.Name AS ReportName

,S.[Description] Descr

,S.[LastRunTime] LastRun

,ES.[EndDate]

,S.[LastStatus]

,U.[UserName] AS Owner

,@ServerURL + '/SubscriptionProperties.aspx?ItemPath=' + REPLACE(REPLACE(Path,'/','%2f'),' ','+') + '&IsDataDriven=False&SubscriptionID=' + CAST(S.[SubscriptionID] AS VARCHAR(MAX)) AS SubscriptionURL

,@ServerURL + '/Report.aspx?ItemPath=' + REPLACE(REPLACE(Path,'/','%2f'),' ','+') + '&SelectedTabId=PropertiesTab&SelectedSubTabId=SubscriptionsTab&SortBy=LastExecuted&IsAscending=false' AS ReportURL

FROM Subscriptions S

JOIN [dbo].[Users] AS U ON [S].[OwnerID] = [U].[UserID]

JOIN [ReportServer].[dbo].[Catalog] AS C ON [S].[Report_OID] = [C].[ItemID]

JOIN [ExpiredSubscriptions] ES ON S.[SubscriptionID] = ES.[SubscriptionID]

ORDER BY C.[Name], ES.[EndDate] DESC

If you have any other good queries, please drop them in a comment below.

Labels: DBA, R2, SQL Server, SQL Server 2008, SQL Server 2008R2, SSRS, TSQL

Saturday, November 12, 2011

SQLSaturday #99 Scripts

Hello to everyone who found my blog after attending my SQLSaturday presentation on Execution Plans. As promised, I'm attaching my scripts.

What are Execution Plans?

The definition I used in my presentation was:

"In simplest terms, Execution Plans are the steps that SQL Server takes in order to return the requested information."

How do I see Execution Plans

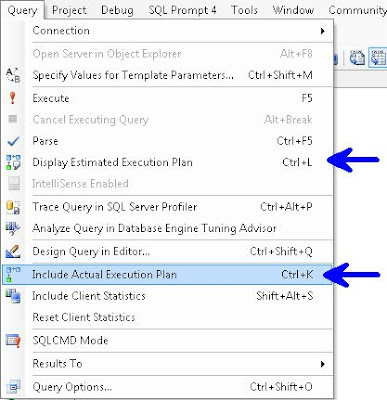

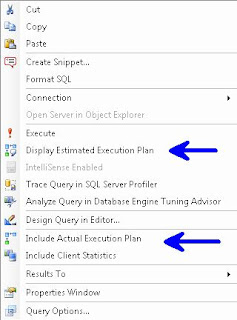

For Graphical Execution Plans there are a few ways:

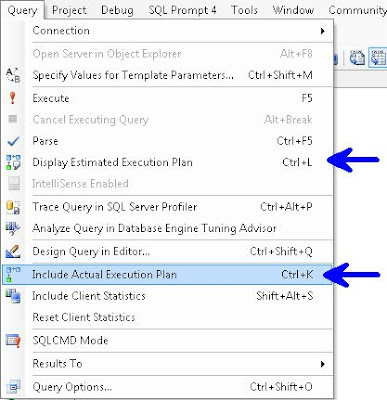

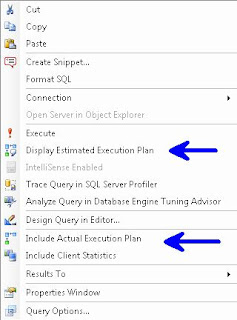

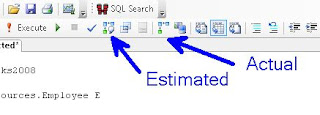

In the Menu Bar choose Query and you can choose either Display Estimated Execution Plan or Include Actual Execution Plan.

Right Click Query Window

Control L (Estimated) / M (Actual)

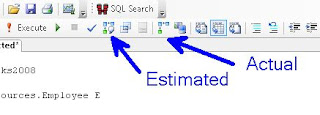

Or the simplest way, use the two buttons

Plan Type

Graphical, Text, XML

Gets more difficult to read as you go from left to right, but also get more info. i.e. Graphical is easier to read than is Text, which is easier to read than XML, but XML gives more info than Text, which in turn gives more info than Graphical.

Note: I've read that Microsoft is planning on deprecating Text Execution Plan

Permission

sysadmin, dbcreator or db_owner, or be granted the ShowPlan permission:

GRANT SHOWPLAN TO [username]

Reuse

The Optimizer stores plans so it can reuse them, this is removed on server restart or DBCC FREEPROCCACHE

The examples below use AdventureWorks and AdventureWorks2008, available at CodePlex

To start, please turn on Include Actual Execution Plan from any of the above described methods. Next run this query:

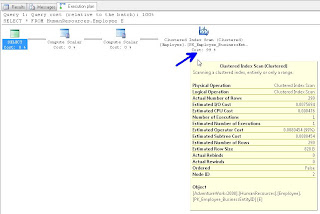

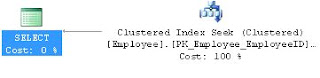

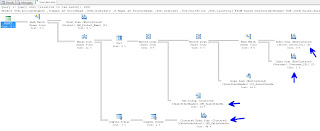

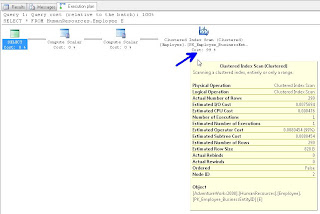

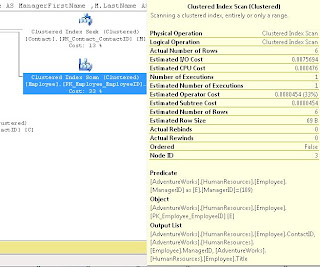

In your results window, you will see a new tab called "Execution Plan", if you click on that, you should see the following. Hovering over the left most object will display the tool tip in this screenshot:

If you right click on any of the objects in this window you can choose Properties and get additional info. I traditionally read from right to left, but you can get important info like "Optimization Level" if you get the properties from the left most (select) object. The most common Optimization Levels are Trivial or Full. This is a trivial plan because there are no where clauses or joins, so the Optimizer can only get this data one way, doing a full table scan.

We discussed the difference between A Scan and a Seek. A Scan means that SQL Server needed to look at every record in the table, where as a Seek means that it can use an index to go directly to the record in question. We likened this to a phone book, if you are looking for the phone number and address for "Sam Smith", a Scan means you have to look at every page and every record even after it already finds a record for Sam Smith, whereas a Seek can go right to the S's at the top of the page and quickly go to the Sam Smith record and stop looking.

We discussed how typically, a scan performs worse than a seek. However if the table is small, and in some other instances, a Scan can be the best option.

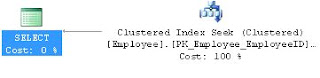

Next we ran the following query:

And we saw an Clustered Index Seek this time.

Our next query:

Showed us the "Missing Index" at the top of the Execution Plan

You can right click on this "Missing Index" text and "Missing Index Details" to have it open the suggested index in a new window.

We altered the index slightly (gave it a name and the WITH(Data_Compression=ROW) hint) and ran this:

We then re-ran Query 3 and saw that we now have an Index Seek.

For Query 4 we ran:

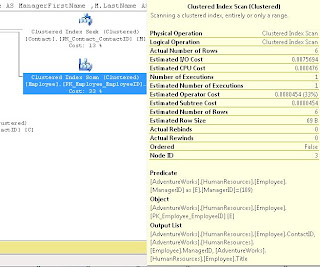

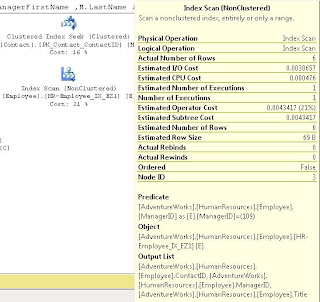

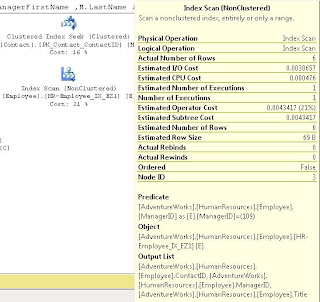

Which showed us a Clustered Index Scan on HumanResources.Employee

It didn't state a missing index so we discussed how to come up with our own index.

We started by noticing that ContactID and ManagerID were both being Joined, and that ManagerID was in the WHERE clause, so we created an index on that, also we used INCLUDE (Title) because Title was in the select List:

We did discuss some of the WITH parts, like the use of ONLINE = ON as a hint to try to make this index without causing any locking if possible.

After we ran this, we noted that the Index Scan still existed; we discussed that because the ContactID wasn't part of the WHERE, it should go in the INCLUDE. After making this change and re-running the index we saw it change to a Index Seek (note I'm using the DROP_EXISTING = ON here rather than the DROP statement I used in class).

We noted that the when we hover over the Employee object in the above screen shots, that it the Estimated I/O Cost dropped from 0.0075 to 0.00386 after we converted from a SCAN to a SEEK and the Estimated Operator Cost changed from .008 to .004 We acknowledged that these numbers seem small, but if this table explodes and becomes very large, we would expect to see these go up and seeing the costs cut in half is a good thing.

For Query 5 we let the audience try to determine what was wrong and needed to be changed:

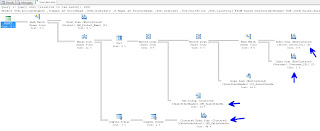

We had a big Execution Plan, we discussed that you can click on the [+] symbol at the bottom left of the window to help scroll around:

We all agreed that the Index Scan's had to go. We also introduced the "Key Lookup" object. I discussed how Key Lookups are not good, and indicate that the Optimizer couldn't get the key from the index. After looking at the query, we decided to add a number of indexes:

This did seem to help, when we compared the Estimated I/O's and CPU's but we still were seeing SCANS.

We scratched our heads and then realized that we didn't have a WHERE clause, so we were pulling every record possible. We added:

WHERE SOH.SalesOrderID = 22

and our Execution Plan looked much, much better.

By this time, I think you can figure out what needs to be done for Query 6:

Next I showed this query which returns all the Cached plans that SQL Server has ready to go:

Then we moved on to looking at Execution Plans returned as Text (Note remember to turn off "Include Actual Execution Plan"):

We saw a bunch of data returned

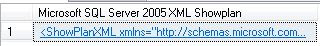

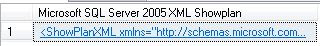

We next moved on to XML Plans:

If you click on the returned XML it will open in Graphical Format. If you really want to see it in XML, when viewing a plan in Graphical Format, right click on a blank part of the screen and choose "Show Execution Plan XML"

I had two more pretty awesome queries to show you...the first was one that finds all "Missing Indexes" for all Databases.

And a query that can show you indexes which potentially are no longer used:

Remember that the numbers returned are since the last restart or DBCC FREEPROCCACHE so if you did that recently, don't trust these too much. Look at user_seeks, user_scans and user_lookups to see how often users have called this index. As always, do your research, this is a tool to help you identify probable indexes which might be unused.

I want to really thank Grant Fritchey, who literally wrote the book on Execution Plans, check out his stuff, he knows way more than I do about it.

http://www.simple-talk.com/author/grant-fritchey/

http://www.simple-talk.com/sql/performance/execution-plan-basics/

http://Scarydba.com/Resources

Lastly, if you are not already a member of PASS or PASSMN, please signup, it's free and we have monthly meetings where we discuss stuff and show code and talk about new stuff...it's a lot of fun. Also, PASS does something called 24 hours of pass, where they do 24 hourly webinars (over 2 days), for free!!! so sign up and check them out.

Please subscribe to my blog and follow me on Twitter (@EricZierdt).

Comment, or email me any questions.

What are Execution Plans?

The definition I used in my presentation was:

"In simplest terms, Execution Plans are the steps that SQL Server takes in order to return the requested information."

How do I see Execution Plans?

For Graphical Execution Plans there are a few ways:

In the Menu Bar choose Query and you can choose either Display Estimated Execution Plan or Include Actual Execution Plan.

Right-click in the query window and choose the same options.

Ctrl+L (Estimated) / Ctrl+M (Actual)

Or, the simplest way, use the two toolbar buttons.

Plan Type

Graphical, Text, XML

These get more difficult to read as you go from left to right, but they also give more info; i.e., Graphical is easier to read than Text, which is easier to read than XML, but XML gives more info than Text, which in turn gives more info than Graphical.

Note: I've read that Microsoft is planning on deprecating Text Execution Plan

Permission

You must be a member of sysadmin, dbcreator or db_owner, or be granted the SHOWPLAN permission:

GRANT SHOWPLAN TO [username]
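
If you are not sure whether a login already has the permission, one way to check is the built-in HAS_PERMS_BY_NAME function, which returns 1 when the permission is held. A small sketch; 'SomeUser' is a made-up database user name, and impersonating it requires IMPERSONATE permission on that user.

```sql
USE AdventureWorks

-- Check the current login
SELECT HAS_PERMS_BY_NAME(DB_NAME(), 'DATABASE', 'SHOWPLAN') AS HasShowplan

-- Check another database user by impersonating it ('SomeUser' is a placeholder)
EXECUTE AS USER = 'SomeUser'
SELECT HAS_PERMS_BY_NAME(DB_NAME(), 'DATABASE', 'SHOWPLAN') AS HasShowplan
REVERT
```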

Reuse

The Optimizer stores plans so it can reuse them. The stored plans are removed on a server restart or when DBCC FREEPROCCACHE is run.

The examples below use AdventureWorks and AdventureWorks2008, available at CodePlex

To start, please turn on Include Actual Execution Plan from any of the above described methods. Next run this query:

/* QUERY 1 */

USE AdventureWorks2008

SELECT *

FROM HumanResources.Employee E

In your results window, you will see a new tab called "Execution Plan". If you click on that, you should see the following. Hovering over the leftmost object will display the tooltip in this screenshot:

If you right-click on any of the objects in this window, you can choose Properties and get additional info. I traditionally read from right to left, but you can get important info like "Optimization Level" if you get the properties from the leftmost (SELECT) object. The most common Optimization Levels are Trivial and Full. This is a trivial plan because there are no WHERE clauses or joins, so the Optimizer can only get this data one way: doing a full table scan.

We discussed the difference between a Scan and a Seek. A Scan means that SQL Server needs to look at every record in the table, whereas a Seek means that it can use an index to go directly to the record in question. We likened this to a phone book: if you are looking for the phone number and address for "Sam Smith", a Scan means you have to look at every page and every record, even after you have already found a record for Sam Smith, whereas a Seek can go right to the S's at the top of the page, quickly go to the Sam Smith record and stop looking.

We discussed how typically, a scan performs worse than a seek. However if the table is small, and in some other instances, a Scan can be the best option.

Next we ran the following query:

/* QUERY 2 */

USE AdventureWorks

SELECT E.Title

FROM HumanResources.Employee E

WHERE E.EmployeeID = 185

And we saw a Clustered Index Seek this time.
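
Besides looking at the plan, you can see the Scan versus Seek difference in the amount of I/O. This wasn't part of the presentation; it is just a sketch using SET STATISTICS IO, which prints the logical reads for each statement on the Messages tab. I would expect the second statement to report fewer logical reads than the first, but check the numbers on your own copy of the database.

```sql
USE AdventureWorks

SET STATISTICS IO ON

-- No WHERE clause: every row of the table has to be read (scan)
SELECT E.Title
FROM HumanResources.Employee E

-- Filter on the clustered key: SQL Server can go straight to the row (seek)
SELECT E.Title
FROM HumanResources.Employee E
WHERE E.EmployeeID = 185

SET STATISTICS IO OFF
```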

Our next query:

/* QUERY 3 */

USE AdventureWorks

SELECT MiddleName

FROM Person.Contact

WHERE Phone LIKE '11%'

Showed us the "Missing Index" at the top of the Execution Plan

You can right-click on this "Missing Index" text and choose "Missing Index Details" to have it open the suggested index in a new window.

We altered the index slightly (gave it a name and added the WITH(Data_Compression=ROW) option) and ran this:

USE [AdventureWorks]

GO

CREATE NONCLUSTERED INDEX [Contact_IX_Phone] ON [Person].[Contact]

(

[Phone]

)

INCLUDE

(

[MiddleName]

)

WITH(Data_Compression=ROW)

GO

We then re-ran Query 3 and saw that we now have an Index Seek.

For Query 4 we ran:

/* QUERY 4 */

USE AdventureWorks

SELECT E.Title

,C.FirstName

,C.LastName

,M.FirstName AS ManagerFirstName

,M.LastName AS ManagerLastName

,E.ManagerID

FROM HumanResources.Employee E

JOIN Person.Contact C ON E.ContactID = C.ContactID

JOIN Person.Contact M ON E.ManagerID = M.ContactID

WHERE E.ManagerID = 109

AND EXISTS (

SELECT 1

FROM Person.Contact AS C2

WHERE E.ContactID = C2.ContactID

)

Which showed us a Clustered Index Scan on HumanResources.Employee

It didn't state a missing index so we discussed how to come up with our own index.

We started by noticing that ContactID and ManagerID were both being joined on, and that ManagerID was in the WHERE clause, so we created an index on those two columns. We also used INCLUDE (Title) because Title was in the SELECT list:

USE [AdventureWorks]

GO

CREATE NONCLUSTERED INDEX [HR-Employee_IX_EZ1] ON [HumanResources].[Employee]

(

[ContactID] ASC, [ManagerID] ASC

)

INCLUDE ( [Title])

WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = ON, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION=ROW) ON [PRIMARY]

GO

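We did discuss some of the WITH options, such as ONLINE = ON, which asks SQL Server to build the index while keeping the table available to other queries, rather than holding blocking locks for the whole build. Note that online index operations require Enterprise Edition.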
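Since ONLINE = ON fails on editions that don't support it, a quick check before running the CREATE INDEX can save you an error. This is only a sketch: it relies on SERVERPROPERTY('EngineEdition') returning 3 for Enterprise (which also covers Developer and Evaluation), and the OnlineIndexHint column is just a label I made up.

```sql
-- EngineEdition = 3 covers Enterprise, Developer and Evaluation editions
SELECT SERVERPROPERTY('Edition') AS Edition
     , SERVERPROPERTY('EngineEdition') AS EngineEdition
     , CASE WHEN CAST(SERVERPROPERTY('EngineEdition') AS INT) = 3
            THEN 'ONLINE = ON should be available'
            ELSE 'Use ONLINE = OFF'
       END AS OnlineIndexHint;
```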

After we ran this, we noted that the Scan was still there. We discussed that because ContactID wasn't part of the WHERE clause, it shouldn't be the leading key column; SQL Server can only seek on ManagerID if ManagerID comes first in the key, so ContactID should go in the INCLUDE instead. After making this change and re-creating the index, we saw the plan change to an Index Seek (note I'm using DROP_EXISTING = ON here rather than the DROP statement I used in class).

USE [AdventureWorks]

GO

CREATE NONCLUSTERED INDEX [HR-Employee_IX_EZ1] ON [HumanResources].[Employee]

(

[ManagerID] ASC

)

INCLUDE ( [ContactID], [Title])

WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = ON, ONLINE = ON, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION=ROW) ON [PRIMARY]

GO

We noted that when we hover over the Employee operator in the execution plan, the Estimated I/O Cost dropped from 0.0075 to 0.00386 after we converted from a Scan to a Seek, and the Estimated Operator Cost changed from .008 to .004. We acknowledged that these numbers seem small, but if this table grows very large we would expect them to go up, so seeing the costs cut in half is a good thing.

For Query 5 we let the audience try to determine what was wrong and needed to be changed:

/* QUERY 5 */

USE AdventureWorks2008

SELECT SOH.AccountNumber

,S.Name AS StoreName

,SOH.OrderDate

,P.Name AS ProductName

,SOD.OrderQty

,SOD.UnitPrice

,SOD.LineTotal

FROM Sales.SalesOrderHeader SOH

JOIN Sales.SalesOrderDetail SOD ON SOH.SalesOrderID = SOD.SalesOrderID

JOIN Sales.Customer C ON SOH.CustomerID = C.CustomerID

JOIN Sales.Store S ON C.StoreID = S.BusinessEntityID

JOIN Production.Product P ON SOD.ProductID = P.ProductID

We had a big Execution Plan; we discussed that you can click on the [+] symbol at the bottom left of the window to help scroll around.

We all agreed that the Index Scans had to go. We also introduced the "Key Lookup" operator. I discussed how Key Lookups are usually not good: they indicate that the nonclustered index the Optimizer used didn't contain every column the query needed, so it had to go back to the clustered index for each row to fetch the rest. After looking at the query, we decided to add a number of indexes:

USE [AdventureWorks2008]

GO

CREATE NONCLUSTERED INDEX [SalesOrderHeader_I01] ON [Sales].[SalesOrderHeader]

(

[SalesOrderID] ASC,

[CustomerID] ASC

)

INCLUDE

(

AccountNumber

,OrderDate

)

WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF

, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION = ROW) ON [PRIMARY]

GO

USE [AdventureWorks2008]

GO

/****** Object: Index [AK_SalesOrderDetail_rowguid] Script Date: 09/23/2011 14:35:47 ******/

CREATE NONCLUSTERED INDEX [SalesOrderDetail_I01] ON [Sales].[SalesOrderDetail]

(

SalesOrderID ASC

)

INCLUDE

(

ProductID

, OrderQty

,UnitPrice

,LineTotal

)

WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF

, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION = ROW) ON [PRIMARY]

GO

USE [AdventureWorks2008]

GO

CREATE NONCLUSTERED INDEX [Customer_I01] ON [Sales].[Customer]

(

[CustomerID] ASC)

INCLUDE (

[StoreID]

)WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF

, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION = ROW) ON [PRIMARY]

GO

USE [AdventureWorks2008]

GO

CREATE NONCLUSTERED INDEX [Store_I01] ON [Sales].[Store]

(

[BusinessEntityID] ASC

)

INCLUDE

(

Name

)

WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF

, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, DATA_COMPRESSION = ROW) ON [PRIMARY]

GO

This did seem to help when we compared the Estimated I/O and CPU costs, but we were still seeing Scans.

We scratched our heads and then realized that we didn't have a WHERE clause, so we were pulling every record possible. We added:

WHERE SOH.SalesOrderID = 22

and our Execution Plan looked much, much better.

By this time, I think you can figure out what needs to be done for Query 6:

/* QUERY 6 */

SELECT LineTotal, OrderQty

FROM Sales.SalesOrderDetail s

JOIN Production.Product p ON s.ProductID = p.ProductID

WHERE CarrierTrackingNumber = '4911-403C-98'
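If you'd like to check your answer for Query 6, here is one possible index, following the same pattern we used above: key on the column in the WHERE clause, and INCLUDE the columns the SELECT list and the JOIN need. This is a sketch, not the one true answer; the index name is made up, and I'm assuming the query is run against AdventureWorks2008 like Query 5.

```sql
USE [AdventureWorks2008]
GO
-- Hypothetical index name; key column comes from the WHERE clause,
-- INCLUDE columns come from the SELECT list and the JOIN
CREATE NONCLUSTERED INDEX [SalesOrderDetail_I02] ON [Sales].[SalesOrderDetail]
(
    [CarrierTrackingNumber] ASC
)
INCLUDE
(
    [ProductID]
    ,[OrderQty]
    ,[LineTotal]
)
WITH (DROP_EXISTING = OFF, ONLINE = OFF, DATA_COMPRESSION = ROW) ON [PRIMARY]
GO
```

After creating it, re-run Query 6 and look for an Index Seek on SalesOrderDetail in place of the Scan.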

Next I showed this query which returns all the Cached plans that SQL Server has ready to go:

SELECT [cp].[refcounts]

, [cp].[usecounts]

, [cp].[objtype]

, [st].[dbid]

, [st].[objectid]

, [st].[text]

, [qp].[query_plan]

FROM sys.dm_exec_cached_plans cp

CROSS APPLY sys.dm_exec_sql_text ( cp.plan_handle ) st

CROSS APPLY sys.dm_exec_query_plan ( cp.plan_handle ) qp ;
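On a busy server that query returns a lot of rows, so it can help to narrow it down. Here is a small variation that shows the most reused plans touching one table; the LIKE filter value is just an example, so swap in whatever text you are hunting for.

```sql
SELECT TOP 20
       cp.usecounts
     , cp.objtype
     , cp.size_in_bytes
     , DB_NAME(st.dbid) AS DBName
     , st.text
FROM sys.dm_exec_cached_plans AS cp
CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS st
WHERE st.text LIKE '%HumanResources.Employee%'  -- example filter; change to taste
ORDER BY cp.usecounts DESC;
```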

Then we moved on to looking at Execution Plans returned as text (note: remember to turn off "Include Actual Execution Plan"):

/* TEXT PLANS */

USE AdventureWorks2008

GO

SET SHOWPLAN_ALL ON; --TURNS ON Estimated Execution Plan (must be the only statement in its batch)

GO

SELECT *

FROM HumanResources.Employee E

GO

SET SHOWPLAN_ALL OFF; --TURNS OFF Estimated Execution Plan

GO

SET STATISTICS PROFILE ON --TURNS ON Actual Execution Plan

USE AdventureWorks2008

SELECT *

FROM HumanResources.Employee E

SET STATISTICS PROFILE OFF --TURNS OFF Actual Execution Plan

We saw a bunch of data returned

We next moved on to XML Plans:

/* XML PLANS */

USE AdventureWorks2008

GO

SET SHOWPLAN_XML ON; --TURNS ON Estimated Execution Plan (must be the only statement in its batch)

GO

SELECT *

FROM HumanResources.Employee E

GO

SET SHOWPLAN_XML OFF; --TURNS OFF Estimated Execution Plan

GO

SET STATISTICS XML ON --TURNS ON Actual Execution Plan

USE [AdventureWorks]

SELECT E.Title

,C.FirstName

,C.LastName

FROM HumanResources.Employee E

JOIN Person.Contact C ON E.ContactID = C.ContactID

WHERE E.ManagerID = 185

SET STATISTICS XML OFF --TURNS OFF Actual Execution Plan

If you click on the returned XML it will open in Graphical Format. If you really want to see it in XML, when viewing a plan in Graphical Format, right click on a blank part of the screen and choose "Show Execution Plan XML"

I had two more pretty awesome queries to show you...the first was one that finds all "Missing Indexes" for all Databases.

SELECT TOP 10 SUBSTRING(mid.statement, 2, (CHARINDEX(']',mid.statement,1)-2)) AS DBName

,mid.statement

,migs.avg_total_user_cost

,migs.avg_user_impact

,migs.user_seeks

,migs.avg_total_user_cost * (migs.avg_user_impact / 100.0)

* (migs.user_seeks + migs.user_scans) AS improvement_measure

,'CREATE INDEX [missing_index_'

+ CONVERT (VARCHAR, mig.index_group_handle) + '_'

+ CONVERT (VARCHAR, mid.index_handle) + '_'

+ LEFT(PARSENAME(mid.statement, 1), 32) + ']' + ' ON ' + mid.statement

+ ' (' + ISNULL(mid.equality_columns, '')

+ CASE WHEN mid.equality_columns IS NOT NULL

AND mid.inequality_columns IS NOT NULL THEN ','

ELSE ''

END + ISNULL(mid.inequality_columns, '') + ')' + ISNULL(' INCLUDE ('

+ mid.included_columns

+ ')', '') AS create_index_statement

,migs.*

,mid.database_id

,mid.[object_id]

FROM sys.dm_db_missing_index_groups mig

INNER JOIN sys.dm_db_missing_index_group_stats migs ON migs.group_handle = mig.index_group_handle

INNER JOIN sys.dm_db_missing_index_details mid ON mig.index_handle = mid.index_handle

WHERE migs.avg_total_user_cost * (migs.avg_user_impact / 100.0)

* (migs.user_seeks + migs.user_scans) > 1

ORDER BY migs.avg_total_user_cost * migs.avg_user_impact * (migs.user_seeks + migs.user_scans) DESC

And a query that can show you indexes which potentially are no longer used:

IF EXISTS (SELECT * FROM tempdb.dbo.sysobjects WHERE ID = OBJECT_ID(N'tempdb..#Temp_UnUsed_Indexes'))

BEGIN

DROP TABLE #Temp_UnUsed_Indexes

END

CREATE TABLE #Temp_UnUsed_Indexes

(

[Server] VARCHAR(100)

, DatabaseName VARCHAR(100)

, TableName VARCHAR(100)

, IndexName VARCHAR(100)

, is_primary_key BIT

, user_seeks INT

, user_scans INT

, user_lookups INT

, user_updates INT

, last_user_seek DATETIME

, last_user_scan DATETIME

, last_user_lookup DATETIME

, last_user_update DATETIME

, system_seeks INT

, system_scans INT

, system_lookups INT

, system_updates INT

, last_system_seek DATETIME

, last_system_scan DATETIME

, last_system_lookup DATETIME

, last_system_update DATETIME

)

SET NOCOUNT ON

DECLARE @Seeks INT = 30

,@Scans INT = 30

,@Lookups INT = 30

,@DBName VARCHAR(MAX)

,@SQL VARCHAR(MAX)

DECLARE ZCursor CURSOR FOR

SELECT NAME --select *

FROM sys.databases

WHERE owner_sid <> 0x01

AND is_read_only = 0

AND user_access = 0

ORDER BY name

OPEN ZCursor

FETCH NEXT FROM ZCursor INTO @DBName

WHILE @@FETCH_STATUS = 0

BEGIN

SET @SQL = '

USE [' + @DBName + ']

DECLARE @Seeks INT = ' + CAST(@Seeks AS VARCHAR(20)) + '

,@Scans INT = ' + CAST(@Scans AS VARCHAR(20)) + '

,@Lookups INT = ' + CAST(@Lookups AS VARCHAR(20)) + '

INSERT INTO #Temp_UnUsed_Indexes

SELECT @@SERVERNAME AS SERVER

, DB_NAME(DDIUS.database_id) AS DatabaseName

, OBJECT_NAME(DDIUS.object_id) AS TableName

, I.name AS IndexName

, I.is_primary_key AS is_primary_key

, DDIUS.user_seeks AS user_seeks

, DDIUS.user_scans AS user_scans

, DDIUS.user_lookups AS user_lookups

, DDIUS.user_updates AS user_updates

, DDIUS.last_user_seek AS last_user_seek

, DDIUS.last_user_scan AS last_user_scan

, DDIUS.last_user_lookup AS last_user_lookup

, DDIUS.last_user_update AS last_user_update

, DDIUS.system_seeks AS system_seeks

, DDIUS.system_scans AS system_scans

, DDIUS.system_lookups AS system_lookups

, DDIUS.system_updates AS system_updates

, DDIUS.last_system_seek AS last_system_seek

, DDIUS.last_system_scan AS last_system_scan

, DDIUS.last_system_lookup AS last_system_lookup

, DDIUS.last_system_update AS last_system_update

FROM sys.dm_db_index_usage_stats AS DDIUS

JOIN sys.indexes AS I ON DDIUS.index_id = I.index_id AND DDIUS.object_id = I.object_id

WHERE database_id = DB_ID()

AND user_seeks <= @Seeks AND user_scans <= @Scans AND user_lookups <= @Lookups

--AND is_primary_key = 0

ORDER BY OBJECT_NAME(DDIUS.object_id), I.name

'

EXEC(@SQL)

-- PRINT @SQL

FETCH NEXT FROM ZCursor INTO @DBName

END

CLOSE ZCursor

DEALLOCATE ZCursor

SELECT *

FROM #Temp_UnUsed_Indexes

WHERE is_primary_key = 0

--AND user_seeks = 0 AND user_scans = 0 AND user_lookups = 0

--AND system_seeks = 0 AND system_scans = 0 AND system_lookups = 0

--AND DatabaseName NOT IN ('MOBILE')

ORDER BY [SERVER]

,DatabaseName

,TableName

,is_primary_key DESC

,IndexName

Remember that the numbers returned are cumulative since the last SQL Server restart (the counters for a database are also cleared when it is detached or shut down), so if that happened recently, don't trust these too much. Look at user_seeks, user_scans and user_lookups to see how often users have called this index. As always, do your research; this is a tool to help you identify indexes which might be unused.
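To judge how much history those usage numbers actually cover, you can check when the instance last started. A minimal sketch, assuming SQL Server 2008 or later (where sys.dm_os_sys_info exposes sqlserver_start_time); the DaysOfUsageData alias is just my label.

```sql
SELECT sqlserver_start_time
     , DATEDIFF(DAY, sqlserver_start_time, GETDATE()) AS DaysOfUsageData
FROM sys.dm_os_sys_info;
```

If that only covers a few days, an index with zero seeks may simply not have had its month-end report run yet.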

I want to really thank Grant Fritchey, who literally wrote the book on Execution Plans. Check out his stuff; he knows way more than I do about it.

http://www.simple-talk.com/author/grant-fritchey/

http://www.simple-talk.com/sql/performance/execution-plan-basics/

http://Scarydba.com/Resources

Lastly, if you are not already a member of PASS or PASSMN, please sign up. It's free, and we have monthly meetings where we discuss stuff, show code, and talk about new stuff...it's a lot of fun. Also, PASS does something called 24 Hours of PASS, where they do 24 hourly webinars (over 2 days), for free!!! So sign up and check them out.

Please subscribe to my blog and follow me on Twitter (@EricZierdt).

Comment, or email me any questions.

Labels:

Execution Plans,

SQL,

SQL Saturday,

SQL Saturday 99,

SQL Server,

SQL Server 2008,

SQLSaturday,

TSQL

Thursday, November 10, 2011

New SP_WHO2

A long time ago, I was given a stored proc called "SP_WHOM" which said it was created by "MFrank". SP_WHOM has been a staple in my collection of utilities I use to debug issues on my SQL Servers. I never paid it much mind until recently, when the thought occurred to me that I knew how to get all of the data SP_WHOM provides in an easier way, and I could add some beneficial new fields in the process. So I set out to design my own SP_WHOM and took a look at the fields provided in the sys.sysprocesses system view. All the fields I wanted were in there, so this is what I came up with:

USE [master]

GO

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

/*******************************

** Created By: Eric Zierdt **

** ericzierdt@gmail.com **

** ericemployed.blogspot.com **

*******************************/

CREATE PROCEDURE [dbo].[sp_whom]

AS

SET NOCOUNT ON

IF EXISTS (SELECT * FROM tempdb.dbo.sysobjects WHERE ID = OBJECT_ID(N'tempdb..#RawData'))

BEGIN

DROP TABLE #RawData

END

SELECT SPID

,UPPER(status) AS Status

,LogiName

,HostName

,CASE blocked

WHEN 0 THEN ''

ELSE CAST(blocked AS VARCHAR(5))

END AS BlockedBy

,Open_Tran

,DB_NAME(S.dbid) AS DBName

,CMD AS Command

,Last_Batch

,DATEDIFF(mi,last_batch,GETDATE()) AS RunTimeMins

,DATEDIFF(ss,last_batch,GETDATE()) AS RunTimeSecs

,CPU AS CPU_Time

,Physical_IO AS DiskIO

,Program_Name

,ST.text AS SQL_Text

INTO #RawData

FROM sys.sysprocesses AS S

CROSS APPLY sys.dm_exec_sql_text(S.sql_handle) ST

SELECT *

FROM #RawData R

WHERE spid <> @@SPID

AND status NOT IN ( 'background', 'sleeping' )

UNION

SELECT *

FROM #RawData AS RD

WHERE SPID IN (

SELECT BlockedBy

FROM #RawData AS RD2

WHERE RD2.BlockedBy <> ''

)

ORDER BY SPID

DROP TABLE #RawData

GO

Why are you doing a UNION, you might ask? I'm doing it because I want to display only those SPIDs which are not in a "Sleeping" or "Background" status; however, if a SPID is blocked by another SPID, I'd like to display the blocking SPID as well. If I didn't do this and someone opened a transaction, updated records, and didn't close the transaction, their SPID's status would be Sleeping, so you would see that any SPID trying to access the record(s) is blocked, but you wouldn't know anything about the SPID doing the blocking.

I'd love feedback, is there something I should add to this to make it more useful? Please follow my blog and leave me some feedback.

P.S. Because this is being placed in the master database and has the sp_ prefix, you can call it from any database by just executing "sp_whom" (as long as it is the first statement in the batch); no need for exec or master.. or anything.
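If you only care about the blocking part and don't want to install a proc, the same question can be asked of the newer DMVs directly. This is a rough sketch rather than a replacement for sp_whom: it only lists requests that are currently blocked, and the column aliases are my own.

```sql
SELECT r.session_id            AS BlockedSPID
     , r.blocking_session_id   AS BlockedBy
     , r.wait_type
     , r.wait_time             AS WaitTimeMs
     , s.login_name
     , s.host_name
     , st.text                 AS SQL_Text
FROM sys.dm_exec_requests AS r
JOIN sys.dm_exec_sessions AS s ON r.session_id = s.session_id
CROSS APPLY sys.dm_exec_sql_text(r.sql_handle) AS st
WHERE r.blocking_session_id <> 0
ORDER BY r.wait_time DESC;
```

Unlike sp_whom, this does not show a row for the blocker itself, which is exactly the gap the UNION in the proc is there to fill.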

Labels:

SP_WHO,

SP_WHO2,

SP_WHOM,

SQL,

SQL Server,

SQL Server 2008,

SQLSaturday,

TSQL

Friday, September 16, 2011

Deleting ZZ'd Objects

If you are like me, you have some method or process of checking that a given table/proc/etc. is safe to be dropped prior to dropping it. My current method is to do some checks, see how often stuff is being used, search code, and then rename the object with a ZZ_ prefix. I let the object exist for two weeks as ZZ_ before I finally script it out and drop it.

I decided to write a little script to search out the names of all my ZZ'd objects on a server (specifically User Tables, Procs and Agent Jobs) and store them in a temp table. Next I email the list to my development team offering them one last chance to save the object, and set about scripting it out. The script also creates a DROP script for you to run, so when you are ready, you can just run the script.

Works pretty well. Please comment below on how you facilitate removing old objects; is your method different from mine?

/*******************************

** Created By: Eric Zierdt **

** ericzierdt@gmail.com **

** ericemployed.blogspot.com **

*******************************/

IF object_id('tempdb..#ObjectList') IS NOT NULL

BEGIN

DROP TABLE #ObjectList

END

CREATE TABLE #ObjectList

(

DBName VARCHAR(100)

,ObjectName VARCHAR(100)

,ObjectType VARCHAR(2)

,ObjectType_Desc VARCHAR(100)

)

DECLARE @DBName AS VARCHAR(MAX)

,@SQL AS VARCHAR(MAX)

DECLARE ZCursor CURSOR FOR

SELECT name

FROM sys.databases

WHERE owner_sid <> 0x01 -- binary literal (not a string) so the sa-owned system databases are actually skipped

ORDER BY name

OPEN ZCursor

FETCH NEXT FROM ZCursor

INTO @DBName

WHILE @@FETCH_STATUS = 0

BEGIN

PRINT 'Starting ' + @DBName

SET @SQL = 'USE [' + @DBName + ']

SELECT ''' + @DBName + ''' AS DBName, name,type,type_desc FROM sys.objects WHERE name LIKE ''%%zz%%'' ORDER BY CASE WHEN type = ''U'' THEN 1 ELSE 2 END, name

'

INSERT INTO #ObjectList

( DBName, ObjectName,ObjectType,ObjectType_Desc )

EXEC (@SQL)

FETCH NEXT FROM ZCursor

INTO @DBName

END

CLOSE ZCursor

DEALLOCATE ZCursor

USE msdb

INSERT INTO #ObjectList

( DBName, ObjectName,ObjectType,ObjectType_Desc )

SELECT 'msdb',name,'A','Agent Job'

FROM dbo.sysjobs

WHERE name LIKE '%zz%'

-- SELECT * FROM #ObjectList

-- RUN THE CODE BELOW TO PRINT OUT THE DROP COMMANDS FOR THE OBJECTS

/*

DECLARE @DBName AS VARCHAR(MAX)

,@SQL AS VARCHAR(MAX)

,@ObjectName AS VARCHAR(MAX)

,@ObjectType AS VARCHAR(2)

,@Job_ID VARCHAR(257)

DECLARE DeleteCursor CURSOR FOR

SELECT DBName, ObjectName,ObjectType FROM #ObjectList --WHERE ObjectType = 'U'

OPEN DeleteCursor

FETCH NEXT FROM DeleteCursor

INTO @DBName,@ObjectName,@ObjectType

WHILE @@FETCH_STATUS = 0

BEGIN

IF @ObjectType = 'U'

BEGIN

SET @SQL = 'DROP TABLE [' + @DBName + '].[dbo].[' + @ObjectName + ']

GO'

END

ELSE IF @ObjectType = 'P'

BEGIN

SET @SQL = 'USE [' + @DBName + ']

DROP PROCEDURE [dbo].[' + @ObjectName + ']

GO'

END

ELSE IF @ObjectType = 'A'

BEGIN

SELECT @Job_ID = job_id FROM msdb.dbo.sysjobs_view WHERE name = N'' + @ObjectName + ''

SET @SQL = 'EXEC msdb.dbo.sp_delete_job @job_id=N''' + @Job_ID + ''', @delete_unused_schedule=1

GO'

END

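ELSE

BEGIN

-- Emit a comment instead of re-printing the previous object's DROP statement

SET @SQL = '-- Unknown Object Type Found: [' + @ObjectType + '] for ' + @ObjectName

END

PRINT @SQL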

FETCH NEXT FROM DeleteCursor

INTO @DBName,@ObjectName,@ObjectType

END

CLOSE DeleteCursor

DEALLOCATE DeleteCursor

*/
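One thing to watch in the drop script above is that it hard-codes the dbo schema. If some of your ZZ'd objects live in other schemas, a sketch like this builds the statements with the real schema name. It runs against the current database only, covers just tables and procs, and assumes your prefix is literally ZZ_ (the [_] keeps LIKE from treating the underscore as a wildcard).

```sql
-- Run in the database you want to inspect
SELECT o.type_desc
     , 'DROP ' + CASE o.type WHEN 'U' THEN 'TABLE' ELSE 'PROCEDURE' END
       + ' ' + QUOTENAME(s.name) + '.' + QUOTENAME(o.name) AS DropStatement
FROM sys.objects AS o
JOIN sys.schemas AS s ON o.schema_id = s.schema_id
WHERE o.type IN ('U', 'P')
  AND o.name LIKE 'ZZ[_]%'
ORDER BY o.type_desc, o.name;
```

QUOTENAME also protects you from object names containing brackets or spaces, which plain string concatenation does not.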

Labels:

DROP TABLES,

SQL,

SQL Server,

SQL Server 2008,

TSQL,

ZZ'd Objects

Thursday, June 16, 2011

Restoring MSDB causes Log Shipping Failures

My situation is as follows: I have created a new Staging Server, and we want everything to be as close as possible to our Production OLAP server, including the Job System...how best to do this?

I tried playing around with creating a linked server and copying stuff from msdb from primary to staging, but eventually I ran into problems with IDs and just couldn't make it work, so I decided to simply copy the msdb database from my OLAP server and restore it on my Staging server. Sounds simple, right? Well, there were a number of problems that I ran into, specifically with regard to Log Shipping. With some help from Google and Twitter, I was able to resolve the issues and wanted to post the fixes to help any of you that run into the same issues.

FYI, I'm running SQL Server 2008 R2

First Error: Log Shipping jobs show a status of "Suspended" and I can't get them to do anything.

Fix: Thanks to http://www.sqlservercentral.com/Forums/Topic8379-7-1.aspx and user TVR, I found the answer; I needed to run:

use msdb

go

delete from msdb.dbo.syssubsystems

exec msdb.dbo.sp_verify_subsystems 1

go

And Restart the service.
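If you want to see what that fix changed, you can look at the subsystem table before and after. My understanding (treat it as an assumption, since I only hit this on one server) is that a restored msdb can carry subsystem DLL paths from the source instance, and re-running sp_verify_subsystems repopulates them for the local install.

```sql
SELECT subsystem
     , subsystem_dll
     , agent_exe
FROM msdb.dbo.syssubsystems
ORDER BY subsystem;
```

If the paths in subsystem_dll point at folders that don't exist on the staging box, that would be consistent with the jobs showing as Suspended.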

Next problem: Log Shipping Copies work fine, but Restores fail, saying "Error: Could not find a log backup file that could be applied to secondary database". This time help came from Twitter, where user @AngryPets a.k.a. Michael K. Campbell pointed me in the direction of the msdb..log_shipping_monitor_secondary table. It took quite a bit of playing with it and the associated tables to figure out what needed to be reset/changed, but I was finally able to get it to work by doing this:

/*******************************

** Created By: Eric Zierdt **

** ericzierdt@gmail.com **

** ericemployed.blogspot.com **

*******************************/

UPDATE msdb.dbo.log_shipping_monitor_secondary

SET secondary_server = '[Staging_Server_Name]'

, last_restored_file = NULL -- Need to get rid of last restore data

WHERE secondary_server = '[Production_Server_Name]'

UPDATE msdb.dbo.log_shipping_secondary

SET monitor_server = '[Staging_Server_Name]'

WHERE monitor_server = '[Production_Server_Name]'

Simple enough, but took me forever to figure out.
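Before and after running the updates, it's worth eyeballing the monitor table so you know which rows still carry the production server name. A minimal check, using only columns from msdb.dbo.log_shipping_monitor_secondary:

```sql
SELECT secondary_server
     , secondary_database
     , primary_server
     , last_copied_file
     , last_restored_file
     , last_restored_date
FROM msdb.dbo.log_shipping_monitor_secondary
ORDER BY secondary_database;
```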

Hope this helps, if so, drop me a comment.

Wednesday, June 8, 2011

Enable/Disable jobs

This post will probably not have mass appeal, but it's something I wrote recently that I find of value. The situation I was working with is that on our OLAP server, we have a number of "Invoke" agent jobs that fire off every 2 minutes and check whether we need to run our ETLs and process our cube. When we are doing releases or work on ETLs, we want to disable all the Invoke jobs so they don't start. I'm sure you can think of other situations where this might be necessary outside of cube processing.

So one way to do this (arguably the faster way) is to open your job manager and highlight the invoke jobs and right-click and choose disable. But what if you have a few invokes disabled for one reason or another? You'll have to either remember which ones were disabled, or make a list, and make sure you don't re-enable those when you are done. Or, what if you want to wait for your current Invoke to end before you do the disable (not necessary, but what if), or you want to do it at a specific time of day?

I came up with a script that I put into two agent jobs to facilitate this. These can be scheduled and work pretty well.

The first job creates a storage table, stores the IDs of the enabled jobs, then disables them. (It checks to see if the storage table exists first and, if so, errors out.)

/*******************************

** Created By: Eric Zierdt **

** ericzierdt@gmail.com **

** ericemployed.blogspot.com **

*******************************/

IF OBJECT_ID('msdb.dbo.ActiveInvokeJobs') IS NULL

BEGIN

CREATE TABLE msdb.dbo.ActiveInvokeJobs (

job_id UNIQUEIDENTIFIER

, [Name] VARCHAR(255)

)

INSERT INTO msdb.dbo.ActiveInvokeJobs

( job_id, [Name])

SELECT job_id

,[Name]

FROM msdb.dbo.sysjobs S

WHERE enabled = 1

AND name LIKE '%invoke%'

UPDATE msdb.dbo.sysjobs

SET enabled = 0

WHERE job_id IN (

SELECT job_id

FROM msdb.dbo.ActiveInvokeJobs

)

END

ELSE

BEGIN

RAISERROR ('Storage Table Already Exists.',16,1);

END

The next agent job checks to see if the storage table exists and, if so, enables the disabled jobs, then drops the storage table.

/*******************************

** Created By: Eric Zierdt **

** ericzierdt@gmail.com **

** ericemployed.blogspot.com **

*******************************/

IF OBJECT_ID('msdb.dbo.ActiveInvokeJobs') IS NOT NULL

BEGIN

UPDATE msdb.dbo.sysjobs

SET enabled = 1

WHERE job_id IN (

SELECT job_id

FROM msdb.dbo.ActiveInvokeJobs

)

DROP TABLE msdb.dbo.ActiveInvokeJobs

END

ELSE

BEGIN

RAISERROR ('Storage Table Does Not Exist.',16,1);

END
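One caveat with both scripts: they update msdb.dbo.sysjobs directly, and as far as I know SQL Server Agent may not notice a direct table change until it refreshes its cache. The supported route is msdb.dbo.sp_update_job, which changes the job and notifies the Agent. Here is a sketch of the disable step done that way; the cursor name is made up, and you would still capture the job IDs into the storage table first, as above.

```sql
DECLARE @job_id UNIQUEIDENTIFIER

DECLARE JobCursor CURSOR LOCAL FAST_FORWARD FOR
    SELECT job_id
    FROM msdb.dbo.sysjobs
    WHERE enabled = 1
      AND name LIKE '%invoke%'

OPEN JobCursor
FETCH NEXT FROM JobCursor INTO @job_id

WHILE @@FETCH_STATUS = 0
BEGIN
    -- sp_update_job changes the job and lets SQL Server Agent know about it
    EXEC msdb.dbo.sp_update_job @job_id = @job_id, @enabled = 0
    FETCH NEXT FROM JobCursor INTO @job_id
END

CLOSE JobCursor
DEALLOCATE JobCursor
```

The re-enable job would be the same loop reading job_id from the storage table and passing @enabled = 1.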

Labels:

Agent Jobs.,

SQL Server,

SQL Server 2008,

TSQL

Thursday, April 21, 2011

Getting Object Counts

Recently I was reporting a bug I noticed with a 3rd party tool, and the support group asked me to send them "the number of instances, number of databases per instance, total number of tables and total number of indexes." Now I knew the number of instances I was monitoring, and I knew the approximate number of tables (perhaps a good DBA should know this number, not sure...do other DBAs out there with larger servers know the exact number? Comment below, I'm interested), but I had no idea how many indexes. So I wrote a little script to give me this number and, as I like to do, I'm making it available to the community. Hope this helps you...if you find use of it (even years in the future), post below.

/***********************************

** Author: Eric Zierdt **

** Date: 4/21/2011 **

** Email: eric.zierdt@gmail.com **

***********************************/

DECLARE @CountAll AS TABLE (CntName VARCHAR(100), Cnt INT)

/*******************************************

** GET COUNT OF ALL NON-SYSTEM DATABASES **

*******************************************/

INSERT INTO @CountAll

SELECT 'TotalDBs',COUNT(1) AS TotalDBs

FROM sys.databases D

WHERE owner_sid <> 0x01 -- binary literal (not a string) so the sa-owned system databases are actually excluded

/******************************

** GET COUNT OF ALL TABLES **

** FOR NON-SYSTEM DATABASES **

******************************/

DECLARE @CountTable AS TABLE (DB VARCHAR(100),CNT INT)

DECLARE @SQL VARCHAR(MAX)

DECLARE @DBName VARCHAR(128)

SET @DBName = ''

WHILE @DBName < (

SELECT MIN(name)

FROM sys.databases

WHERE name > @DBName

AND name NOT IN ('master','model','msdb','tempdb')

)

BEGIN

SELECT @DBName = MIN(NAME) FROM sys.databases WHERE name > @DBName AND NAME NOT IN ('master','model','msdb','tempdb')

SET @SQL = '

USE [' + @DBName + ']

SELECT ''' + @DBName + ''' AS DBName

,COUNT(1) AS Cnt

FROM sys.tables

'

INSERT INTO @CountTable

EXEC (@SQL)

END

INSERT INTO @CountAll

SELECT 'TotalTables',SUM(CNT) AS TotalTables

FROM @CountTable

/*******************************

** GET COUNT OF ALL INDEXES **

** FOR NON-SYSTEM DATABASES **

*******************************/

DECLARE @IndexTable AS TABLE (DB VARCHAR(100),TableName VARCHAR(100), CNT INT)

SET @DBName = ''

WHILE @DBName < (

SELECT MIN(name)

FROM sys.databases

WHERE name > @DBName

AND name NOT IN ('master','model','msdb','tempdb')

)

BEGIN

SELECT @DBName = MIN(NAME) FROM sys.databases WHERE name > @DBName AND NAME NOT IN ('master','model','msdb','tempdb')

SET @SQL = '

USE [' + @DBName + ']

SELECT ''' + @DBName + ''' AS DBName

,OBJECT_NAME(I.object_id) AS TableName

, COUNT(1) AS Cnt

FROM sys.indexes I

JOIN sys.tables T ON I.object_id = T.object_id

WHERE I.name IS NOT NULL

GROUP BY OBJECT_NAME(I.object_id)

'

--PRINT @SQL

INSERT INTO @IndexTable

EXEC (@SQL)

END

INSERT INTO @CountAll

SELECT 'TotalIndexes',SUM(CNT) AS TotalIndexes

FROM @IndexTable

SELECT *

FROM @CountAll

Thursday, July 8, 2010

Log Shipping error

I had a problem where I noticed on my primary log shipping machine (the one the data originates on) that the LS_Alerts job was failing. It said that a new database we were working on was failing. I also noticed that I had two jobs that didn't belong on that machine: an LS_Copy and an LS_Restore, which should have been on the secondary machine. I believe that another admin set it up from the development machine during the initial phase for some reason, but I couldn't find a source machine. I deleted the two jobs from the Job manager, but the Alert job still showed failures when run. I decided to figure out what the problem was, and this is what I did to look into it:

I saw that the alert job ran the proc sys.sp_check_log_shipping_monitor_alert, so I opened up the proc to see what it was doing. It calls the following:

(select primary_server

,primary_database

,isnull(threshold_alert, 14420)

,backup_threshold

,datediff(minute, last_backup_date_utc, @curdate_utc)

,cast(0 as int)

from msdb.dbo.log_shipping_monitor_primary

where threshold_alert_enabled = 1

and datediff(minute, last_backup_date_utc, @curdate_utc) > backup_threshold)

union

(select secondary_server

,secondary_database

,isnull(threshold_alert, 14421)

,restore_threshold

,datediff(minute, last_restored_date_utc, @curdate_utc)

,isnull(last_restored_latency,0)

from msdb.dbo.log_shipping_monitor_secondary

where threshold_alert_enabled = 1

and (datediff(minute, last_restored_date_utc, @curdate_utc) > restore_threshold

or last_restored_latency > restore_threshold))

and raises an error if there are records.

When I ran it on my primary machine, the second statement (from log_shipping_monitor_secondary) returned a record. The odd thing was that when I just did a select * against the table, I saw that the primary server for that record was the same server as the secondary server...so it was set to log ship to itself. From that point it was simple: delete the record and the error went away.
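If you suspect the same thing on your server, a query like this should surface any monitor rows where a server is listed as shipping to itself. The commented-out DELETE is only a sketch with a placeholder database name; look at the row first and make sure it really is bogus before removing anything.

```sql
SELECT secondary_server
     , secondary_database
     , primary_server
     , primary_database
FROM msdb.dbo.log_shipping_monitor_secondary
WHERE primary_server = secondary_server;

-- Only after confirming the row is bogus ('YourDatabaseName' is a placeholder):
-- DELETE FROM msdb.dbo.log_shipping_monitor_secondary
-- WHERE primary_server = secondary_server
--   AND secondary_database = 'YourDatabaseName';
```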

Hope that helps someone in the future.

Labels:

Log Shipping,

SQL,

SQL Server 2008,

SS2008,

TSQL
